AI is Not Software, It's Industrial Fuel: Architecting for the New Economy

The industry is currently operating under a massive, expensive delusion. We are treating Artificial Intelligence like traditional SaaS software. You buy a license, you make an API call, and it just works, right?

Wrong.

If you treat autonomous AI agents like software, you will go bankrupt. Truly autonomous AI is not software. It is Industrial Fuel.

When you run a heavy industrial process, you don’t just “buy a factory license.” You pay for the fuel that powers the combustion engine. Every token generated, every context window expanded, and every complex reasoning step taken is a gallon of fuel burned. If your engine is inefficient, or if you use high-octane rocket fuel to run a simple conveyor belt, your operational costs will rapidly outpace your revenue.
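To put rough numbers on the fuel gauge, here is a back-of-the-envelope sketch. The tier names and per-token prices are placeholders I made up to show the arithmetic, not any vendor's real pricing, and `call_cost` and `monthly_cost` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Price in euros per one million tokens (hypothetical figures)."""
    input_per_million: float
    output_per_million: float


# Placeholder prices, chosen only to illustrate the gap between tiers.
PRICES = {
    "premium": ModelPrice(input_per_million=10.0, output_per_million=30.0),
    "light": ModelPrice(input_per_million=0.10, output_per_million=0.40),
}


def call_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in euros of a single model call on the given tier."""
    price = PRICES[tier]
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


def monthly_cost(tier: str, calls_per_day: int, input_tokens: int,
                 output_tokens: int, days: int = 30) -> float:
    """Cost in euros of running the same call pattern for a month."""
    return call_cost(tier, input_tokens, output_tokens) * calls_per_day * days


if __name__ == "__main__":
    for tier in PRICES:
        print(tier, round(monthly_cost(tier, 1000, 2000, 500), 2))
```

With these made-up prices, 1,000 calls a day at 2,000 input and 500 output tokens each comes to about €1,050 a month on the premium tier and about €12 on the light tier. The exact figures do not matter. The ratio does.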

Welcome to the new economy. If you want to survive, you need to stop acting like a software consumer and start acting like a Chief Engineer of a High-Tech Refinery.

The Illusion of AI as Software

In the traditional software paradigm, a poorly optimized SQL query might slow down a page load by a few milliseconds. In the AI paradigm, a poorly optimized autonomous agent loop might recursively call a premium reasoning model (like GPT-4 or Grok 4) thousands of times, generating a massive infrastructure liability overnight.
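One cheap defense against a runaway loop is a hard fuel gauge that stops the agent before it overspends. The sketch below is a minimal illustration, assuming you can estimate the cost of each call up front. `FuelGauge`, `BudgetExceeded`, and `run_agent_loop` are hypothetical names, and `step` stands in for whatever model call your agent makes.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when the next call would break the call or cost limit."""


class FuelGauge:
    def __init__(self, max_calls: int, max_cost_eur: float) -> None:
        self.max_calls = max_calls
        self.max_cost_eur = max_cost_eur
        self.calls = 0
        self.spent_eur = 0.0

    def charge(self, cost_eur: float) -> None:
        """Record one call, refusing it if either limit would be exceeded."""
        if self.calls + 1 > self.max_calls:
            raise BudgetExceeded("call limit reached")
        if self.spent_eur + cost_eur > self.max_cost_eur:
            raise BudgetExceeded("cost limit reached")
        self.calls += 1
        self.spent_eur += cost_eur


def run_agent_loop(step: Callable[[], bool], gauge: FuelGauge,
                   cost_per_call_eur: float) -> str:
    """Call step() until it reports completion or the budget runs out."""
    try:
        while True:
            gauge.charge(cost_per_call_eur)  # check the budget before each call
            if step():
                return "done"
    except BudgetExceeded:
        return "halted"


if __name__ == "__main__":
    gauge = FuelGauge(max_calls=5, max_cost_eur=1.0)
    # A step that never finishes, simulating an agent stuck in a loop.
    print(run_agent_loop(lambda: False, gauge, 0.05), gauge.calls)
```

The point of charging before the call rather than after is that the loop halts at the limit instead of one call past it.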

I recently reviewed my own operational costs and realized I was burning roughly €2,000 a month in AI API fees. Why? Because I was building complex, autonomous systems that did real, heavy lifting.

This isn’t a “personal expense.” This is the raw material cost of the new economy. It is an Infrastructure Liability. If you are building AI solutions for clients, you cannot absorb these costs. You must bill them as “Fuel,” and more importantly, you must architect systems that use that fuel as efficiently as possible.

Architecting the Data Refinery

How do we build engines that don’t burn the bank account? By constructing a robust refinery that routes tasks based on computational cost and complexity.

In a typical setup, developers send every single request to the most expensive, most capable model available. This is equivalent to fueling a forklift with jet fuel.

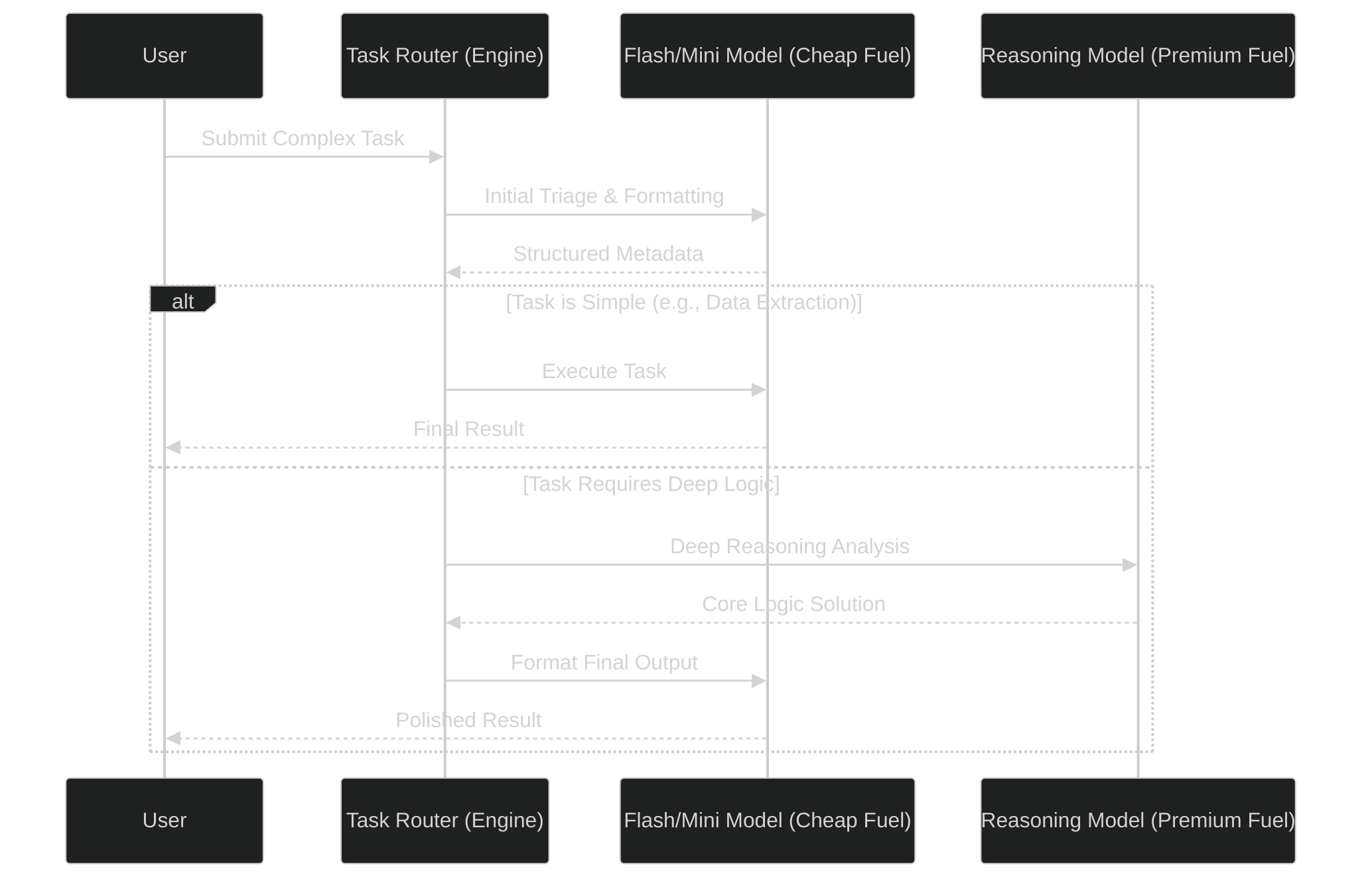

In a high-tech refinery, we use a tiered approach. We utilize fast, inexpensive models (like Gemini Flash or Grok Mini) for triage, routing, and formatting. We only ignite the premium reasoning models when deep, complex logic is absolutely required.
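A minimal sketch of that tiered routing might look like the following. Everything here is an assumption for illustration: `triage` is a keyword heuristic standing in for a call to a cheap model, and `light_model` and `premium_model` are stubs where real API clients would go.

```python
from typing import Callable, Tuple

ModelFn = Callable[[str], str]

# Hypothetical markers of tasks that need deep reasoning.
COMPLEX_MARKERS = ("prove", "plan", "analyze", "debug")


def triage(task: str) -> str:
    """Label a task 'simple' or 'complex'.

    Stand-in for a cheap-model classification call; here it is only
    a keyword check so the example runs without any API.
    """
    lowered = task.lower()
    if any(marker in lowered for marker in COMPLEX_MARKERS):
        return "complex"
    return "simple"


def dispatch(task: str, light: ModelFn, premium: ModelFn) -> Tuple[str, str]:
    """Send the task to the cheapest tier that triage says can handle it."""
    if triage(task) == "complex":
        return "premium", premium(task)
    return "light", light(task)


def light_model(task: str) -> str:
    """Stub for a fast, inexpensive model."""
    return f"[light] {task}"


def premium_model(task: str) -> str:
    """Stub for a premium reasoning model."""
    return f"[premium] {task}"


if __name__ == "__main__":
    for task in ("Format this date as ISO 8601",
                 "Analyze the outage and plan a fix"):
        print(dispatch(task, light_model, premium_model))
```

The default matters: anything triage does not flag goes to the light tier, so the premium model only fires when something explicitly asks for it.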

The Engine Block: Durable Agent Loops

To safely contain this combustion, you need a heavy-duty engine block. You cannot rely on fragile, ephemeral scripts. If your script crashes halfway through a multi-step reasoning task, you lose the progress, but you still pay for the fuel burned.

This is where durable execution frameworks (like Restate) and strict data validation (like Pydantic) become non-negotiable.

Here is a high-level abstraction of how a durable, fuel-efficient agent loop operates:
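The sketch below uses only the Python standard library to show the idea. It is not Restate's API and it does not use Pydantic: a JSON file plays the role of the durable journal, and a dataclass with a check in `__post_init__` plays the role of the strict schema. `StepResult`, `run_durable`, and the journal layout are all hypothetical.

```python
import json
from dataclasses import dataclass
from pathlib import Path
from typing import Callable, Dict, List, Tuple

Journal = Dict[str, str]
Step = Tuple[str, Callable[[Journal], str]]


@dataclass(frozen=True)
class StepResult:
    """Validated output of one agent step (stand-in for a strict schema)."""
    step: str
    output: str

    def __post_init__(self) -> None:
        if not isinstance(self.output, str) or not self.output.strip():
            raise ValueError(f"step {self.step!r} returned empty or non-text output")


def load_journal(path: Path) -> Journal:
    """Read completed step outputs from disk, or start with an empty journal."""
    return json.loads(path.read_text()) if path.exists() else {}


def run_durable(steps: List[Step], journal_path: Path) -> Journal:
    """Run steps in order, skipping any whose result is already journaled."""
    journal = load_journal(journal_path)
    for name, fn in steps:
        if name in journal:
            continue  # fuel already paid for: reuse the stored result
        result = StepResult(step=name, output=fn(journal))  # validate before saving
        journal[result.step] = result.output
        # Checkpoint after every step. A production system would make this
        # write atomic; a plain overwrite keeps the sketch short.
        journal_path.write_text(json.dumps(journal))
    return journal
```

If the process crashes on step three, rerunning `run_durable` with the same journal path picks up at step three. Steps one and two are read back from the journal instead of being paid for again.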

By enforcing strict schemas and routing dynamically, we ensure that every unit of computational fuel is spent precisely where it yields the highest return on investment.

The Business Bottom Line

Moving from an ad-hoc “garbage pile” of API calls to a clean, highly structured “Data Stream” is the only path forward.

We must adopt an API-first accounting mentality not just for our finances, but for our compute architecture. When you build durable engines with intelligent routing and robust state management, you aren’t just writing code. You are establishing Systemic Integrity.

You are building a safe harbor in a volatile digital economy. Stop selling software. Start refining fuel.